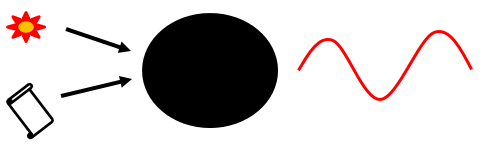

The cosmos, even empty space, is thought to be full of virtual particles. They appear in pairs, so that momentum is conserved, and recombine after a short while. In 1976 Hawking showed that a black hole's event horizon can separate these pairs by trapping one partner on the wrong side of the horizon. The star-crossed lovers are unable to recombine, so the other one becomes real and is emitted as Hawking radiation. So whereas black holes can hoover up information-rich objects such as flowers and manuscripts (see below left), they can only emit thermal, random Hawking radiation (see below right). The information in the flower and the manuscripts therefore appears to have been lost.

Whether information can really be lost like this is a debate at the heart of physics, which has still not come to grips with the new concept of information, but let us see what empiricism and a little logic can offer. Landauer’s principle (ref 1) argues that when computer memory is erased, say from the complex 11010 to the uniform 00000, information is lost and disorder, or entropy, is reduced. A net reduction cannot be allowed, so heat must be released. This heat has now been observed (ref 2), whereas the 'unitarity of the wavefunction' has not. One point for information loss. Another point is that quantised inertia (QI), and therefore the observed galaxy rotation without dark matter, can be derived beautifully by assuming information loss (ref 3).
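To put a number on the heat involved, here is a minimal Python sketch of the usual statement of Landauer's bound: at least kT ln 2 of heat per erased bit. The function name and the 300 K room temperature are illustrative assumptions, not values taken from refs 1 or 2.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def landauer_limit_joules(temperature_k: float, bits: int = 1) -> float:
    """Minimum heat (J) released when `bits` bits are erased at temperature_k."""
    if temperature_k < 0 or bits < 0:
        raise ValueError("temperature and bit count must be non-negative")
    # Landauer bound: k_B * T * ln(2) per erased bit.
    return bits * K_B * temperature_k * math.log(2)


if __name__ == "__main__":
    # Erasing the five-bit pattern 11010 to 00000 at an assumed 300 K.
    print(f"{landauer_limit_joules(300.0, bits=5):.3e} J")
```

The result is of order 1e-20 J for five bits, which is why the heat took decades to detect.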

The picture is not complete though. In QI, if you accelerate, a horizon obscures your backwards view of the world, erasing information and providing, via Landauer, exactly the right amount of energy to fuel the inertial back-push (ref 3). However, if you stop accelerating, then that information comes back again. Where was it hiding in the meantime? The QI approach may offer a compromise here since accelerating objects see Unruh radiation that inertial (unaccelerating) observers do not. In QI information is in the eye of the beholder. Each object has its own informational universe, and what has been deleted in one may be retrieved by negotiation from another.
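For a sense of scale, the temperature of the Unruh radiation seen by an accelerating object can be estimated from the standard Unruh formula T = ħa / (2πck). The sketch below is only an illustration. The function name and the example acceleration are assumptions, and it does not reproduce the QI derivation in ref 3.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 299792458.0         # speed of light, m/s
K_B = 1.380649e-23      # Boltzmann constant, J/K


def unruh_temperature_k(acceleration_m_s2: float) -> float:
    """Unruh temperature (K) seen by an observer with the given proper acceleration."""
    if acceleration_m_s2 < 0:
        raise ValueError("acceleration magnitude must be non-negative")
    return HBAR * acceleration_m_s2 / (2.0 * math.pi * C * K_B)


if __name__ == "__main__":
    # An inertial observer (a = 0) sees no Unruh radiation at all.
    for a in (0.0, 9.81):
        print(f"a = {a} m/s^2 -> T = {unruh_temperature_k(a):.3e} K")
```

At everyday accelerations the temperature is tiny, of order 1e-20 K, and it vanishes for an inertial observer, which is the sense in which the radiation is in the eye of the beholder.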

References

1. Landauer, R., 1961. Irreversibility and heat generation in the computing process. IBM J. Research and Development, 5, 3, 183-191.

2. Hong et al., 2016. Experimental test of Landauer's principle in single-bit operations on nanomagnetic memory bits. Science Advances, 2, 3, e1501492.

3. McCulloch, M.E., 2020. Quantised inertia, and galaxy rotation, from information theory. Adv. in Astrophysics, 5, 4, 92-94.

11 comments:

Mike - the ability to derive QI using information theory might imply that the number of Unruh wavelengths an object can see is actually the information itself. As regards information having mass, consider an encrypted store of data, such as a hard disk. If you don't have the decryption key, it looks like a random sequence of 0s and 1s, but if you know the right key it becomes usable information. Does the mass change when you are given the decryption key?

Computer memory may not be the best way of looking for the mass of information, since the two states have different energy levels and thus a different mass anyway. To be a reliable memory store there also needs to be a higher energy state between the two levels, a barrier that thermal energy is (for a specified average time) insufficient to cross and so flip the memory state from one to the other. The actual kinetic energy available at a certain temperature is not fixed at 0.5kT per degree of freedom; that is just the average, with a lower limit of zero and no upper limit. So raising the height of the barrier between the two wells only reduces the chances of a memory-bit flip and cannot eliminate it. Thus in practice we choose the "hump" between the two wells based on how long we require that bit to remain as we set it, and the higher we set that hump the more reliable the memory becomes for long-term storage. The energy we put in to change the bit needs to be above the hump, and as the memory settles in the other state that energy emerges again as heat (random-direction energy). We'll always have bit-rot, and the question is how much. I think Landauer may have missed that the kinetic energy of heat isn't exactly 0.5kT: that's the average over time, with the actual energy at any point in time being random. Using an average number always loses information, and sometimes leads to a faulty inference.
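The trade-off between hump height and retention time can be sketched with a simple Arrhenius-type model, in which the mean time before a thermal flip grows exponentially with the barrier height measured in units of kT. This is an assumed textbook model rather than anything stated in the comment, and the 1 ns attempt time and the function names are illustrative guesses.

```python
import math


def mean_retention_time_s(barrier_in_kt: float, attempt_time_s: float = 1e-9) -> float:
    """Mean time before a thermal flip: tau = tau0 * exp(E_barrier / kT)."""
    return attempt_time_s * math.exp(barrier_in_kt)


def flip_probability(elapsed_s: float, barrier_in_kt: float,
                     attempt_time_s: float = 1e-9) -> float:
    """Chance that the bit has flipped at least once after elapsed_s seconds."""
    tau = mean_retention_time_s(barrier_in_kt, attempt_time_s)
    # 1 - exp(-t / tau), written with expm1 to stay accurate for tiny t / tau.
    return -math.expm1(-elapsed_s / tau)


if __name__ == "__main__":
    one_year_s = 365.25 * 24 * 3600
    for barrier in (20, 40, 60):
        p = flip_probability(one_year_s, barrier)
        print(f"barrier = {barrier} kT: P(flip within a year) = {p:.3e}")
```

In this model the flip probability falls steeply as the barrier rises but never reaches zero for any finite barrier, which matches the point about bit-rot above.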

Maybe the problem here is in the use of the term "information is deleted". In QI, the information is not deleted, but you simply can't see some of it while accelerating. Stop the acceleration, and you can again see it. Unlike Landauer, though, if that information is indeed the number of Unruh wavelengths, it's not an average number but instead precise and quantised.

For Black Holes, would it be reasonable to suggest that they entirely block Unruh waves rather than just a partial shielding or damping we get from normal matter? Since the rate of time goes to zero at the surface of the Black Hole, you cannot get a reflection of a wave. Not the same as a horizon where the node must happen, and effectively the wave is reflected. For a Black Hole, when we look at it the horizon would effectively be at infinity.

Simon: Good comment. The number of allowed Unruh waves seeable is indeed another way to derive QI and this was the first method I used - in my first paper (MNRAS, 2007). Later, Renda (2019) tackled it in a more detailed way. It is a good approach, because it predicts more complex behaviour that can be tested. The effects differ at very low accelerations. Of course I agree that the physics / information content cannot depend on the presence of a sentient being :)

who says that '0000' has less information than '1111'? they are both information, and both could be equally important, depending on the context

Unknown: No one says that. 0000 has the same information as 1111, but e.g. 1101 has more.

why does 1101 have more information than 1111? is that because there are more transitions? but it's not a stream of data with transitions, it's 4 independent memory cells that we assign to be logically grouped.

Also, to Simon, it's a convention that a 1 is a positive voltage and a 0 is no voltage, there is no reason why this could not be flipped.

and if you think that transitions == information, 1010101010101010 can be compressed to 8x10 with no loss of information, but a great reduction in the number of transitions

David Lang

David - in practical memory chips we often have flipped the voltage ranges that define a 0 and 1 (and an AND gate becomes a NOR gate when you flip the logic), but the underlying commonality is that it takes a certain amount of trigger-energy to cause the flip, that this energy is dissipated as heat after flipping the bit, and the lower that trigger-energy is the more likely it is to get flipped when you don't want it to be, by thermal energy. Thus there's no actual minimum energy associated with a memory bit, since it depends on how hot it is and how long you require it to remain stable. The time is always a half-life, too, and that bit you had intended to be archival may flip immediately after it's been written.

AFAIK, in information theory a totally random byte has more information than a less-random one. Thus the information is really the minimum number of bits you would need to encode that data using a perfect (lossless) zip algorithm. When the minimum number of bits you need to use is the same as the actual number of bits used, you've reached maximum information which means you know a minimum amount (because it's totally random). However, I haven't studied information theory, so someone who has can maybe correct if necessary. Still, this isn't information as a computer-nerd understands it.
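One common way to make this concrete is the Shannon entropy of the symbol frequencies, measured in bits per symbol. The sketch below is a simple illustration under that assumption (the function name is made up), and it only counts how often each symbol occurs, ignoring the order.

```python
import math
from collections import Counter


def entropy_bits_per_symbol(s: str) -> float:
    """Shannon entropy of the symbol frequencies in s, in bits per symbol."""
    if not s:
        raise ValueError("need at least one symbol")
    n = len(s)
    # Sum of p * log2(1 / p) over the distinct symbols, with p = count / n.
    return sum((c / n) * math.log2(n / c) for c in Counter(s).values())


if __name__ == "__main__":
    for pattern in ("0000", "1111", "1101", "1010101010101010"):
        print(pattern, round(entropy_bits_per_symbol(pattern), 3))
```

By this measure 0000 and 1111 both score zero and 1101 scores more. The alternating pattern 1010101010101010 scores the maximum of 1 bit per symbol even though it is obviously compressible, because a frequency count cannot see the repetition. A measure based on the shortest lossless encoding, as described above, would catch it.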

If, however, we're talking about the number of Unruh wavelengths, then that is far more straightforward. It's all stuff we can count.

Hi Mike.

Your reflections on quantized inertia have allowed you to deduce the stability of rotating systems (such as galaxies) despite their apparent excessive rotation speeds. This reflects an old and intriguing observation of astrophysicists.

Another surprising and more recent observation of astrophysicists is that the matter of the cosmos (galaxies, clusters of galaxies, "black holes") seems to be concentrated on the surface and on the lines of intersection of vast zones almost empty of matter. It is as if these bubbles of space were pushing the massive matter away.

Do you have in mind a scenario that could connect this strange structuring of space to your theory on inertia?

Well, it looks like modified inertia is going mainstream.

Lenny Susskind gives the 2022 Oppenheimer Lecture.

THE 2022 OPPENHEIMER LECTURE: THE QUANTUM ORIGINS OF GRAVITY

https://www.youtube.com/watch?v=-OkwGDKoY0o

"In QI information is in the eye of the beholder. Each object has its own informational universe, and what has been deleted in one may be retrieved by negotiation from another."

Dear Mike, I agree with this hypothesis.

I feel like it's time for physics to include "the hard problem of consciousness".

A physical model of reality that cannot account for consciousness is describing only half of the picture, in a schizophrenic way...

This raises in return the problem of definition - what is consciousness - and we have today at least four competing hypotheses.

I tend to lean to the pan-psychic hypothesis, but in order to do that one must distinguish "consciousness" and "thoughts", which are different processes, the latter being mainly mental based (this confusion leads to endless and pointless debates).

A brief example: a tree is a sentient and conscious being, but has no thoughts at all.

Making a huge shortcut, I would say that any physical structure that opposes entropy has a certain level of "consciousness" or "sense of being". (Our vocabulary is still too poor to express these new notions - accordingly misunderstandings might quickly arise.)

Therefore - to the point - consciousness is in my opinion the most important "field" in the universe, as it might permeate, infuse and perhaps precede everything "material". Analogy with Higgs field, just as a metaphor, could be useful.

In my view, consciousness can store information in higher dimensions. There are indeed other dimensions but we cannot sense, let alone comprehend them, with tools and concepts designed for our 3+1 dimensions. We get a bit metaphysical here, but science will eventually get there one day and these phenomena will become mundane.

Accordingly, intuitively I feel that you're very close to a correct description of the process when you write: "Each object has its own informational universe, and (the information that) has been deleted in one may be retrieved by negotiation from another."

Welcome to the internet of consciousness, very funny to use! ;)

Kindest regards & a wonderful summer,

Alex

If we set a bit to zero (whatever its previous value) then we are destroying information, that is, decreasing entropy, and this has to be balanced by an entropy increase elsewhere that is at least as large (plus, possibly, a bit more). The heat generated carries the information of the previous value of the bit (but mixed up - if we knew the quantum phases etc. we could reverse time and reconstruct from the heat the original value of the bit). There is no difference between overwriting a zero or a one, or always overwriting with a 1 or a zero; the issue is that we go from a state which might be either to a single, known state.

This heat is the manifestation of the unitarity of the wavefunction.

Susskind proved that if you add information to a BH its surface area increases. One bit of information increases surface area by a square Planck length. This has fascinated me no end since I learned about it.
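As a rough check on the size of that increase, the sketch below assumes the standard Bekenstein-Hawking relation S = kA / (4 l_p^2) and takes one bit as k ln 2 of entropy. Neither assumption is spelled out in the comment, and the function names are illustrative.

```python
import math

PLANCK_LENGTH_M = 1.616255e-35  # Planck length in metres (CODATA 2018 value)


def area_per_bit_in_planck_areas() -> float:
    """Horizon area added per bit, in units of the Planck length squared."""
    # S = k * A / (4 * l_p^2) and S_bit = k * ln(2)  =>  dA = 4 * ln(2) * l_p^2.
    return 4.0 * math.log(2)


def area_increase_m2(bits: float) -> float:
    """Horizon area increase in square metres for the given number of bits."""
    return bits * area_per_bit_in_planck_areas() * PLANCK_LENGTH_M ** 2


if __name__ == "__main__":
    print(f"{area_per_bit_in_planck_areas():.3f} Planck areas per bit")
    print(f"{area_increase_m2(1):.3e} m^2 per bit")
```

Under these assumptions the factor comes out at about 2.77 square Planck lengths per bit, so "a square Planck length" is right to within an order of magnitude.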
